You might overlook PCIe bandwidth, but in AI servers it is often a bigger bottleneck than raw processing power, because it limits how fast data moves between CPUs, GPUs, storage, and network adapters. Too few lanes or an outdated PCIe generation slows data loading and neural network training, no matter how fast the accelerators are. As workloads grow, scaling PCIe properly becomes essential to sustaining speed and efficiency. Understanding this hidden constraint can help you optimize your setup, so read on to see where it comes from and how to address it.

Key Takeaways

- Teams often underestimate how much PCIe bandwidth AI workloads actually need, which leads to performance bottlenecks.

- Insufficient PCIe lanes or outdated standards can limit data flow between GPUs, CPUs, and storage, hindering scalability.

- Proper PCIe sizing is critical for seamless integration of quantum computing components and hybrid AI architectures.

- Future AI and quantum applications demand newer PCIe generations (e.g., PCIe 5.0/6.0) to avoid performance degradation.

- Many system designs overlook PCIe scalability, risking latency spikes and reduced throughput as data center and AI environments grow.

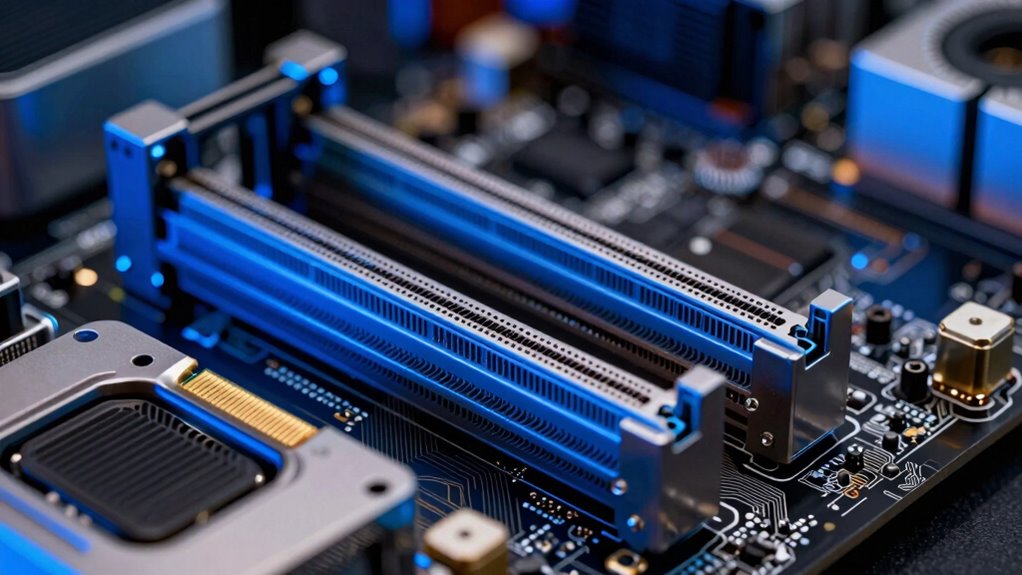

As AI servers handle increasingly complex workloads, sufficient PCIe bandwidth becomes critical for peak performance. You might assume CPU speed or GPU power is the main limit, but the data flow between components is often the real bottleneck. In high-throughput tasks such as training large neural networks or serving real-time AI models, the speed of data transfer across PCIe lanes directly affects overall efficiency. If your PCIe bandwidth isn't scaled properly, transfer delays throttle your system's throughput regardless of how powerful your GPUs or accelerators are.
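To see why a faster GPU alone may not help, here is a minimal back-of-the-envelope model. It assumes each step moves a fixed amount of data over PCIe and that copies either run serially with compute or are fully overlapped with it. The function names and the 500 MB per step and 25 GB/s figures are hypothetical illustration values, not measurements.

```python
def transfer_ms(megabytes: float, link_gb_per_s: float) -> float:
    """Time in ms to move `megabytes` over a link sustaining `link_gb_per_s`.

    Uses decimal units (1 GB = 1000 MB), so MB / (GB/s) comes out in ms.
    """
    return megabytes / link_gb_per_s


def step_time_ms(compute_ms: float, copy_ms: float, overlapped: bool) -> float:
    """Simplified step-time model (an assumption, not a profiler).

    Overlapped: copies hide behind compute, so the slower of the two dominates.
    Serial: the GPU waits for each copy, so the two times add up.
    """
    return max(compute_ms, copy_ms) if overlapped else compute_ms + copy_ms


if __name__ == "__main__":
    copy = transfer_ms(500, 25.0)  # hypothetical: 500 MB per step at 25 GB/s sustained
    # Each halving of compute time stands in for a GPU twice as fast.
    for compute in (30.0, 15.0, 7.5):
        print(
            f"compute={compute:5.1f} ms  "
            f"overlapped={step_time_ms(compute, copy, True):5.1f} ms  "
            f"serial={step_time_ms(compute, copy, False):5.1f} ms"
        )
```

With these numbers the copy takes 20 ms. Once compute drops below that, the overlapped step time stops improving. At that point the PCIe link, not the GPU, sets the pace.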

Quantum computing, where integration with classical data centers is an emerging frontier, gives PCIe bandwidth a surprisingly important role. Quantum processors are still in early development, but they depend on fast data exchange with classical servers for tasks such as error correction and hybrid quantum-classical algorithms. As these components become part of data center infrastructure, demand for high-bandwidth PCIe connections grows. When you plan PCIe capacity, consider not just current AI workloads but also future quantum integrations, which require rapid data movement between classical and quantum hardware. That raises the bar for PCIe, pushing toward more lanes per device and faster generations to meet hybrid computing needs. Emerging interconnect technologies may further improve data flow and keep systems performant as demands increase.

Data center integration makes scalable, reliable PCIe infrastructure even more important. Your data center needs high-speed interconnects for efficient communication between servers, storage, and accelerators. PCIe is no longer limited to connecting internal components. Network adapters, storage controllers, and external peripherals also attach through it, making it a backbone of data center operations. If you neglect bandwidth scaling, bottlenecks can cause latency spikes and reduce overall throughput. Properly sizing PCIe lanes and adopting newer standards such as PCIe 5.0 or PCIe 6.0 helps your data center handle the massive data flows typical of AI workloads, especially as datasets grow larger and models become more complex.
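Sizing lanes is mostly arithmetic: add up what each device wants and compare the total with what the platform provides. The sketch below assumes a hypothetical server with 128 usable CPU lanes. The device list and the `Device`, `lanes_requested`, and `lane_budget` names are illustrative, not taken from any real product.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    name: str
    lanes: int  # link width the device can use (x4, x8, x16, ...)
    count: int = 1


def lanes_requested(devices: list[Device]) -> int:
    """Total lanes all devices would use if each got its full width."""
    return sum(d.lanes * d.count for d in devices)


def lane_budget(devices: list[Device], available_lanes: int) -> tuple[int, float]:
    """Return (spare lanes, oversubscription ratio).

    Negative spare lanes mean some devices must share lanes, for example behind
    a PCIe switch, which divides the switch's upstream bandwidth among them.
    """
    wanted = lanes_requested(devices)
    return available_lanes - wanted, wanted / available_lanes


if __name__ == "__main__":
    server = [
        Device("GPU", 16, count=8),
        Device("NIC", 16, count=2),
        Device("NVMe SSD", 4, count=4),
    ]
    spare, ratio = lane_budget(server, available_lanes=128)  # hypothetical CPU lane count
    print(f"requested={lanes_requested(server)} spare={spare} oversubscription={ratio:.3f}x")
```

In this example, eight x16 GPUs, two x16 NICs, and four x4 SSDs request 176 lanes against 128 available. That is a 1.375:1 oversubscription, which a switch can absorb only by sharing bandwidth.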

Ultimately, PCIe bandwidth isn't just a technical detail; it's a strategic component of your AI infrastructure. As workloads grow and new technologies like quantum computing join your ecosystem, adequately provisioned and future-proofed PCIe lanes are essential. Skimping on them risks performance degradation and limits your capacity to scale. Investing in appropriate PCIe infrastructure prepares you for today's AI applications and tomorrow's innovations, keeping your systems efficient, responsive, and ready as data center integration evolves.

As an affiliate, we earn on qualifying purchases.

Frequently Asked Questions

How Does PCIe Bandwidth Impact AI Model Training Speed?

PCIe bandwidth directly affects AI model training speed because it sets how fast data moves between hardware components, such as training batches flowing from host memory or storage to the GPUs. When bandwidth is limited, data arrives more slowly, GPUs sit idle waiting for it, and overall efficiency drops. To optimize your hardware, make sure PCIe bandwidth is sufficient by choosing lane counts and PCIe generations that keep data flowing during intensive AI workloads. This minimizes bottlenecks and boosts training performance.
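Another place PCIe bandwidth shows up directly in training time is gradient synchronization between GPUs that lack a dedicated GPU-to-GPU interconnect. The sketch below uses the standard bandwidth term for a ring all-reduce, in which each of N GPUs sends about 2(N-1)/N times the gradient size. It ignores latency, protocol overhead, and overlap with the backward pass. The model size and link speeds in the example are hypothetical.

```python
def ring_allreduce_seconds(grad_bytes: float, n_gpus: int, link_gb_per_s: float) -> float:
    """Bandwidth-only estimate for one ring all-reduce of `grad_bytes`.

    Each GPU sends (and receives) 2 * (N - 1) / N * grad_bytes. Latency,
    protocol overhead, and overlap with computation are ignored.
    """
    if n_gpus < 2:
        return 0.0  # a single GPU has nothing to synchronize
    bytes_per_gpu = 2 * (n_gpus - 1) / n_gpus * grad_bytes
    return bytes_per_gpu / (link_gb_per_s * 1e9)


if __name__ == "__main__":
    grad_bytes = 7e9 * 2  # hypothetical 7B-parameter model with 2-byte (fp16/bf16) gradients
    for gbps in (25.0, 50.0):  # hypothetical sustained per-GPU link bandwidth, GB/s
        t = ring_allreduce_seconds(grad_bytes, n_gpus=8, link_gb_per_s=gbps)
        print(f"{gbps:4.0f} GB/s -> {t:.2f} s per full-gradient all-reduce")
```

Under these assumptions, each of the 8 GPUs moves 24.5 GB per synchronization. That takes roughly 0.98 s at 25 GB/s versus 0.49 s at 50 GB/s, and the time is added to every step unless it is overlapped with computation.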

What Are the Latest PCIe Standards for AI Servers?

The latest PCIe standards for AI servers include PCIe 5.0 and PCIe 6.0. Each generation doubles the per-lane transfer rate: PCIe 5.0 runs at 32 GT/s, twice the 16 GT/s of PCIe 4.0, and PCIe 6.0 doubles that again to 64 GT/s. The extra bandwidth speeds communication between components such as GPUs and CPUs, helping you maximize AI training speed and reduce data transfer bottlenecks.
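The doubling is easy to check with a small calculation. Per-direction bandwidth equals the per-lane transfer rate times the line-encoding efficiency times the lane count, divided by 8 bits per byte. The sketch below hard-codes the published rates and encodings. For PCIe 6.0 it ignores FLIT, FEC, and CRC overhead, so that figure is an upper bound. All figures exclude packet-level protocol overhead.

```python
# Per-lane transfer rate (GT/s, one bit per transfer per lane) and line-encoding efficiency.
# Gen1/2: 8b/10b. Gen3-5: 128b/130b. Gen6: PAM4 in FLIT mode with no 128b/130b
# encoding; FLIT/FEC/CRC overhead is deliberately not modeled here.
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),
    2: (5.0, 8 / 10),
    3: (8.0, 128 / 130),
    4: (16.0, 128 / 130),
    5: (32.0, 128 / 130),
    6: (64.0, 1.0),
}


def link_gb_per_s(gen: int, lanes: int) -> float:
    """Theoretical bandwidth in GB/s for ONE direction of a PCIe link."""
    gt_per_s, efficiency = PCIE_GENERATIONS[gen]
    return gt_per_s * efficiency * lanes / 8


if __name__ == "__main__":
    for gen in (3, 4, 5, 6):
        x16 = link_gb_per_s(gen, 16)
        # Links are full duplex, so total two-way capacity is twice the per-direction figure.
        print(f"PCIe {gen}.0 x16: {x16:6.2f} GB/s per direction, {2 * x16:6.2f} GB/s both ways")
```

Vendor figures often quote both directions combined, so when you compare products, make sure you are comparing per-direction numbers with per-direction numbers.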

Can PCIe Bottlenecks Limit Future AI Hardware Upgrades?

Yes. Think of PCIe bottlenecks as gatekeepers for your hardware upgrades. PCIe is backward compatible, so a newer accelerator or NVMe drive will work in an older slot. However, the link trains to the highest generation and width that both ends support. A PCIe 5.0 card in a PCIe 4.0 slot therefore runs at PCIe 4.0 speed, and an x16 card in a slot wired for x8 gets only eight lanes. As AI demands grow, upgrading the platform's PCIe generation and lane count becomes critical. Without it, future hardware advances, from faster accelerators to quantum computing or data compression components, risk being hamstrung by the slot they sit in.
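A common way a PCIe bottleneck shows up after an upgrade is link negotiation. PCIe is backward compatible, and a link settles on the lower generation and the narrower width of its two ends. The sketch below models that simplification; real link training also depends on signal integrity and slot wiring. `negotiated_link` and `fraction_of_peak` are hypothetical helper names. The ratio is exact only for Gen3 through Gen5, which share 128b/130b encoding.

```python
from __future__ import annotations


def negotiated_link(device: tuple[int, int], slot: tuple[int, int]) -> tuple[int, int]:
    """(generation, width) a link settles on, assuming it trains to the lower of each."""
    (dev_gen, dev_width), (slot_gen, slot_width) = device, slot
    return min(dev_gen, slot_gen), min(dev_width, slot_width)


def fraction_of_peak(device: tuple[int, int], slot: tuple[int, int]) -> float:
    """Share of the device's own peak bandwidth it gets in this slot."""
    gen, width = negotiated_link(device, slot)
    dev_gen, dev_width = device
    if not (3 <= gen <= 5 and 3 <= dev_gen <= 5):
        raise ValueError("exact only for Gen3-Gen5, which share 128b/130b encoding")
    # Each generation in this range doubles the per-lane rate; bandwidth scales with width.
    return 2.0 ** (gen - dev_gen) * (width / dev_width)


if __name__ == "__main__":
    new_card = (5, 16)  # hypothetical PCIe 5.0 x16 accelerator
    old_slot = (4, 8)   # hypothetical PCIe 4.0 slot wired for x8
    print(negotiated_link(new_card, old_slot), fraction_of_peak(new_card, old_slot))
```

In this example the new card trains at PCIe 4.0 x8 and gets a quarter of its rated bandwidth. Halving the generation and halving the width compound each other.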

How to Measure Actual PCIe Bandwidth in a Server?

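You can measure actual PCIe bandwidth with benchmarks that drive real transfers. Use fio (or CrystalDiskMark on Windows) for NVMe storage throughput, and the `bandwidthTest` program from NVIDIA's CUDA samples for host-to-GPU copies. Keep an eye on storage latency and power consumption during these tests, since thermal or power throttling can depress the results. For real-time monitoring, NVIDIA's `nvidia-smi` reports each GPU's negotiated PCIe generation and link width and can track PCIe throughput. Hardware protocol analyzers give the most detailed view. Together these tools help you identify bottlenecks and tune your server's PCIe configuration before and after AI hardware upgrades.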
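Before benchmarking, it is worth confirming that each device actually negotiated the link you expect. A card that trained at a lower generation or width caps every later measurement. On Linux, sysfs exposes `current_link_speed`, `current_link_width`, `max_link_speed`, and `max_link_width` for PCIe devices, and the sketch below compares them. It assumes a Linux host. Many GPUs also lower their link speed when idle to save power, so check while the device is under load before treating a result as a fault.

```python
from __future__ import annotations

from pathlib import Path

SYSFS_PCI = Path("/sys/bus/pci/devices")


def parse_speed_gt(text: str) -> float | None:
    """Parse a sysfs link-speed string such as '16.0 GT/s PCIe' or '8 GT/s'."""
    parts = text.split()
    if not parts:
        return None
    try:
        return float(parts[0])
    except ValueError:
        return None  # e.g. 'Unknown'


def _read(dev: Path, name: str) -> str | None:
    try:
        return (dev / name).read_text().strip()
    except OSError:
        return None  # attribute missing or unreadable for this device


def downtrained_links(root: Path = SYSFS_PCI) -> list[tuple[str, str, str]]:
    """Return (device, current, max) for links running below their max speed or width."""
    findings: list[tuple[str, str, str]] = []
    if not root.is_dir():
        return findings  # not Linux, or sysfs not mounted
    for dev in sorted(root.iterdir()):
        cur_s, max_s = _read(dev, "current_link_speed"), _read(dev, "max_link_speed")
        cur_w, max_w = _read(dev, "current_link_width"), _read(dev, "max_link_width")
        if None in (cur_s, max_s, cur_w, max_w):
            continue  # device does not expose link attributes
        cur_gt, max_gt = parse_speed_gt(cur_s), parse_speed_gt(max_s)
        if cur_gt is None or max_gt is None or not (cur_w.isdigit() and max_w.isdigit()):
            continue  # unparseable values, skip rather than guess
        if cur_gt < max_gt or int(cur_w) < int(max_w):
            findings.append((dev.name, f"{cur_s} x{cur_w}", f"{max_s} x{max_w}"))
    return findings


if __name__ == "__main__":
    for name, current, maximum in downtrained_links():
        print(f"{name}: running {current}, capable of {maximum}")
```

For NVIDIA GPUs, `nvidia-smi -q` reports the same current and maximum link generation and width in its PCIe section, which is a useful cross-check.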

Are There Alternatives to PCIe for High-Bandwidth AI Data Transfer?

Quantum networking is sometimes pitched as a future alternative to PCIe, but it is not a high-bandwidth data transfer technology. Entanglement and superposition cannot carry information faster than light or replace a classical link. Quantum networks still rely on classical channels and target tasks such as secure key distribution and linking quantum processors. For moving large volumes of AI data today, the practical options are dedicated interconnects such as NVIDIA NVLink for GPU-to-GPU traffic and CXL, which builds on the PCIe physical layer. Keep an eye on emerging quantum networking research, but don't plan AI server bandwidth around it.

Conclusion

Think of PCIe bandwidth as the bridges connecting your AI components, much like the bridges over the Thames that link London's heart to its outskirts. If they are too narrow or congested, your data stalls and performance suffers, like a city gridlocked at rush hour. To keep your AI fleet moving at full speed, size this bandwidth correctly so your servers run like a well-oiled metropolis, where every byte reaches its destination without delay.
