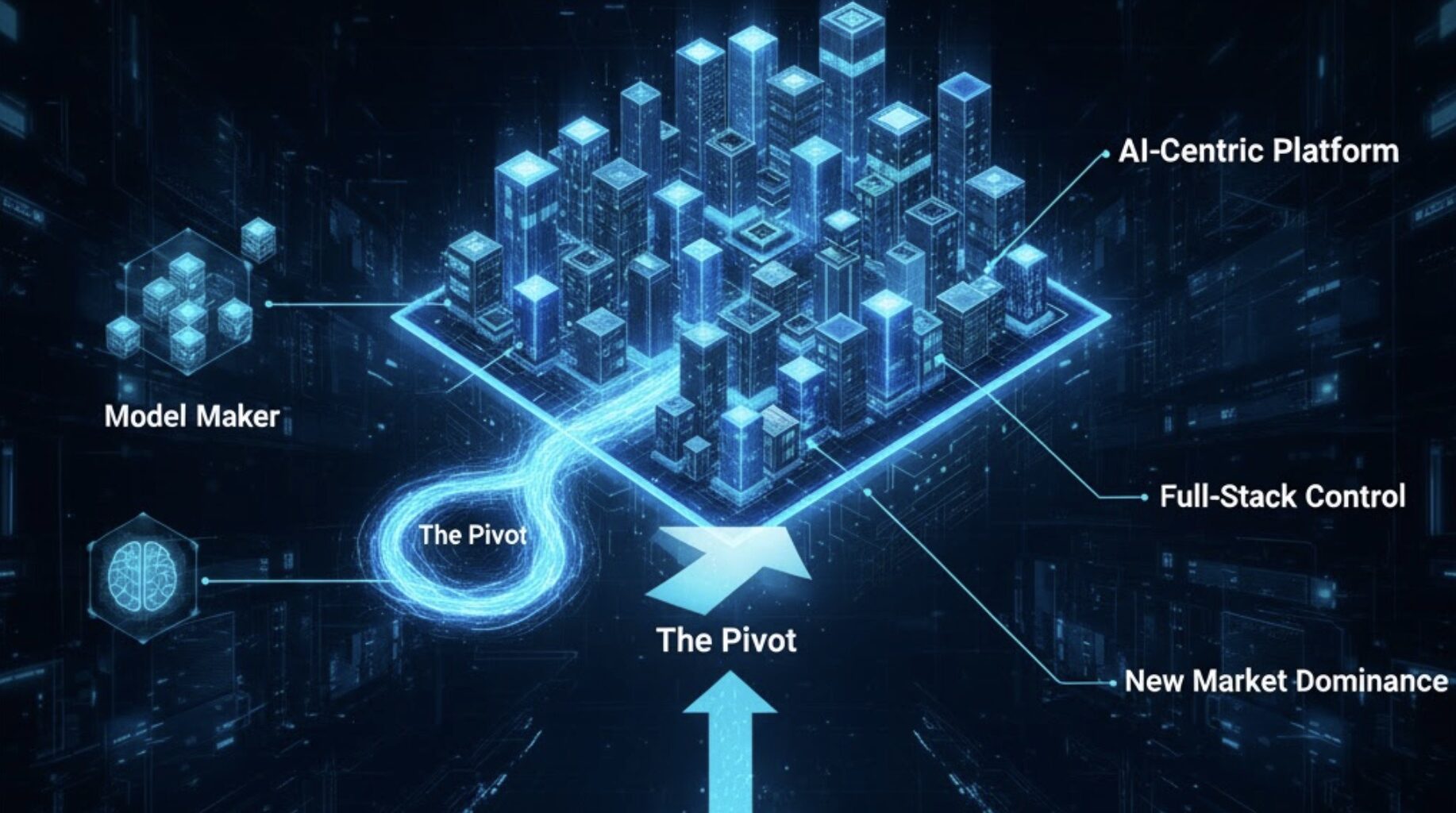

StrongMocha Quick Take — OpenAI is gearing up to become a full‑blown hosting and cloud infrastructure provider—an AI‑first hyperscaler that could redraw the competitive map now dominated by AWS, Azure, and Google Cloud. The strategy hinges on owning the stack: massive AI datacenters, tight hardware tie‑ups (think NVIDIA and AMD), and deep capacity partners like Oracle—often discussed under the “Stargate” banner. The result? More control over compute, faster iteration cycles, and new leverage in how OpenAI partners with (and sometimes competes against) today’s cloud giants.

What to watch

- Partnership calculus: Microsoft remains a close ally, but multi‑cloud and self‑run capacity shift the balance of power.

- Hardware pipeline: NVIDIA + AMD deals aim to lock in next‑gen accelerators at unprecedented scale.

- Economics: Trading cloud “rent” for capex could lower unit costs and open a premium AI hosting business.

- Europe play: Sovereign‑cloud requirements and strict compliance regimes create both friction and first‑mover advantages for providers who localize AI infrastructure.

Read more on the strategic implications, partner dynamics, and Europe’s sovereignty angle:

👉 https://thorstenmeyerai.com/insights/openais-move-into-cloud-hosting-impact-on-partnerships-and-market-landscape/

NVIDIA 5GB nVIDIA Tesla K20 GPU Server Accelerator 900-22081-0010-000 (Renewed)

Item package dimensions: 14.7 L x 8.8 W x 3.4 H inches

As an affiliate, we earn on qualifying purchases.

AMD Ryzen 5 5500 6-Core, 12-Thread Unlocked Desktop Processor with Wraith Stealth Cooler

Can deliver 100+ FPS in the world's most popular games (discrete graphics card required)

VMware VSphere 8 Simplified: A Beginner’s Guide to Virtualization with ESXi 8, vCenter, and Modern VMware Cloud Infrastructure (vSphere Foundation & Enterprise Concepts)

AI hosting data center equipment